As reported in the previous few posts, my 10 GbE QNAP NAS boxes fail to achieve wire speed even with 4-SSD striped arrays. I suspect that the processing power of the NASes is the problem. They’re fine for backup, but I don’t think I’d like to map them as local drives and run apps directly against them.

Background: As also reported here, I have an OWC 32 TB SSD 8-way striped Thunderbolt 4 array that uses SoftRAID as the array controller. I’ve had problems with the SoftRAID licensing; it forgets that it’s already licensed, throws up an error message, and won’t let me enter a license code. In addition, it randomly drops the Thunderbolt 4 connection, so that SoftRAID can’t see the box. Sometimes the Windows disk management software can see the individual disks, and sometimes it can’t.

I don’t trust it any more, and, in spite of the fact that I’ve got $5K tied up in it, I want to abandon it.

There is a possible solution to both issues: the QNAP TVS-h874T. It’s a 10-disk box. It has two M.2 slots, which I’m not using at present. I do have some 8 TB M.2 SSDs in an OWC 4M2 box that has proven flaky, and I may take two of them and put them in the QNAP NAS. The TVS-h874T’s eight main storage bays are for 2.5 inch or 3.5 inch SATA drives. I loaded them with 8 SanDisk 4TB 3D SSDs, for a raw capacity of 32 TB.

The networking capabilities of the TVS-h874T are varied. It comes with two 2.5 GbE copper ports. It has a slot for faster Ethernet ports that supports up to dual 25 GbE SFP28 ports. And it has two Thunderbolt 4 ports. It also has a hefty processor: an Intel Core i9 16-core chip with 64 GB of DRAM. I was hoping that would give me good performance when used with programs that directly access it over Thunderbolt 4.

I configured the drives as an 8-disk RAID 5 array, with no hot spare. I had abandoned RAID 5, because as spinning rust disks got bigger and bigger and access times stayed the same, rebuild times got longer and longer, and the chances of another error during a RAID 5 rebuild grew uncomfortably large. But SSDs are causing me to take a new look at RAID 5. The SSDs reduce the chances of failure in the first place. The SSDs are also generally smaller than the spinners, so there is less data to rebuild.

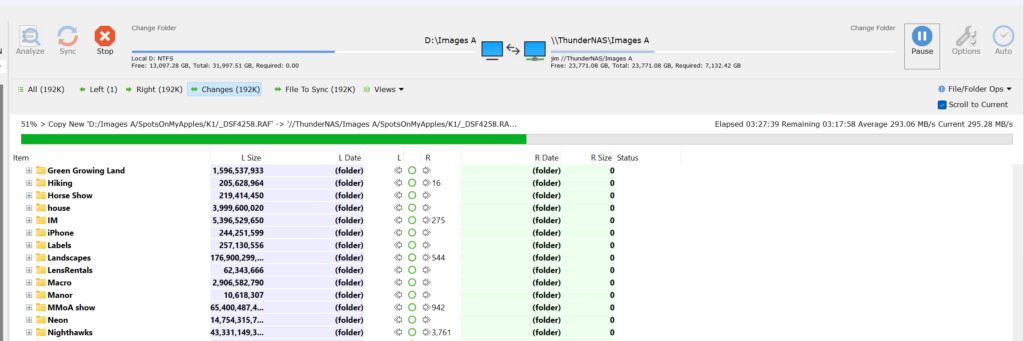
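As a quick sanity check on the capacity numbers, here is a minimal Python sketch of the RAID 5 arithmetic for eight 4 TB drives. The function name is hypothetical, and the figure ignores filesystem overhead and the difference between decimal TB and binary TiB, so the NAS will report somewhat less.

```python
def raid5_usable_tb(disks: int, disk_tb: float) -> float:
    """RAID 5 gives up one disk's worth of space to parity,
    spread across all members of the array."""
    if disks < 3:
        raise ValueError("RAID 5 needs at least three disks")
    return (disks - 1) * disk_tb


raw_tb = 8 * 4.0                      # eight 4 TB SSDs
usable_tb = raid5_usable_tb(8, 4.0)   # one disk's worth goes to parity
print(f"raw: {raw_tb:.0f} TB, usable before overhead: {usable_tb:.0f} TB")
# prints "raw: 32 TB, usable before overhead: 28 TB"
```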

Figuring that the fast processor could handle it, I set up the array for compressed storage. That turned out to reduce my data footprint by about a third. I loaded the array over the 2.5 GbE port from my main workstation’s 32 TB PCIe SSD array. I got essentially wire speed.

To check compatibility with the SSDs, I transferred about 17 TB of data. No problem.
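For a sense of scale, a rough lower bound on how long a transfer like that takes at wire speed can be sketched as below. This assumes decimal terabytes and a perfectly efficient 2.5 Gb/s link; the `efficiency` parameter is a hypothetical knob for protocol overhead, not a measured value.

```python
def transfer_hours(data_tb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Hours needed to move data_tb decimal terabytes over a link_gbps link."""
    bits = data_tb * 1e12 * 8
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 3600


# 17 TB over 2.5 GbE with no overhead at all
print(f"{transfer_hours(17, 2.5):.1f} hours")  # prints "15.1 hours"
```

Any real protocol overhead pushes the time above that figure.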

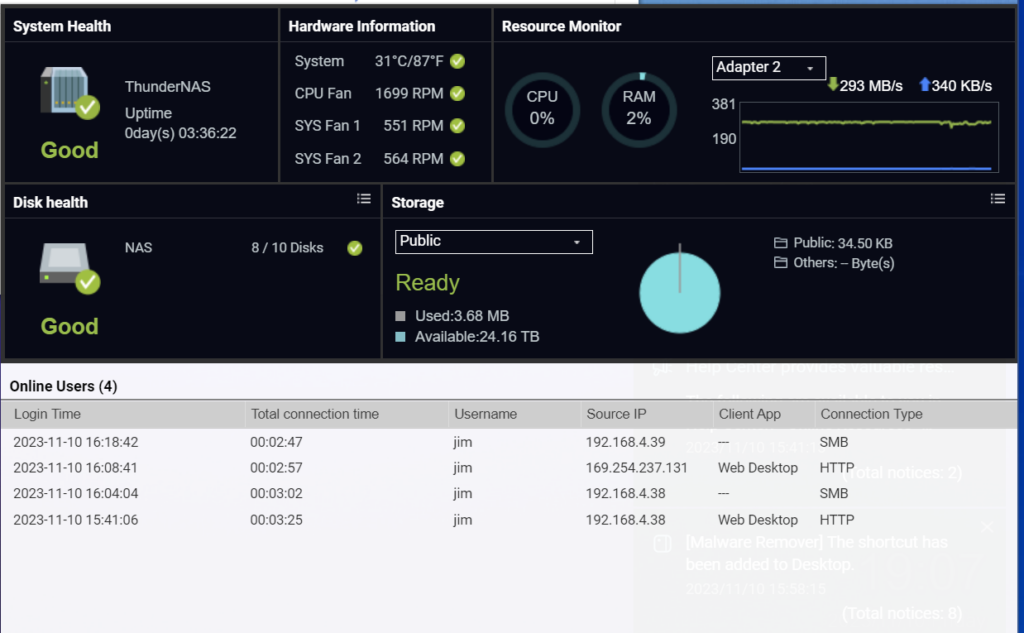

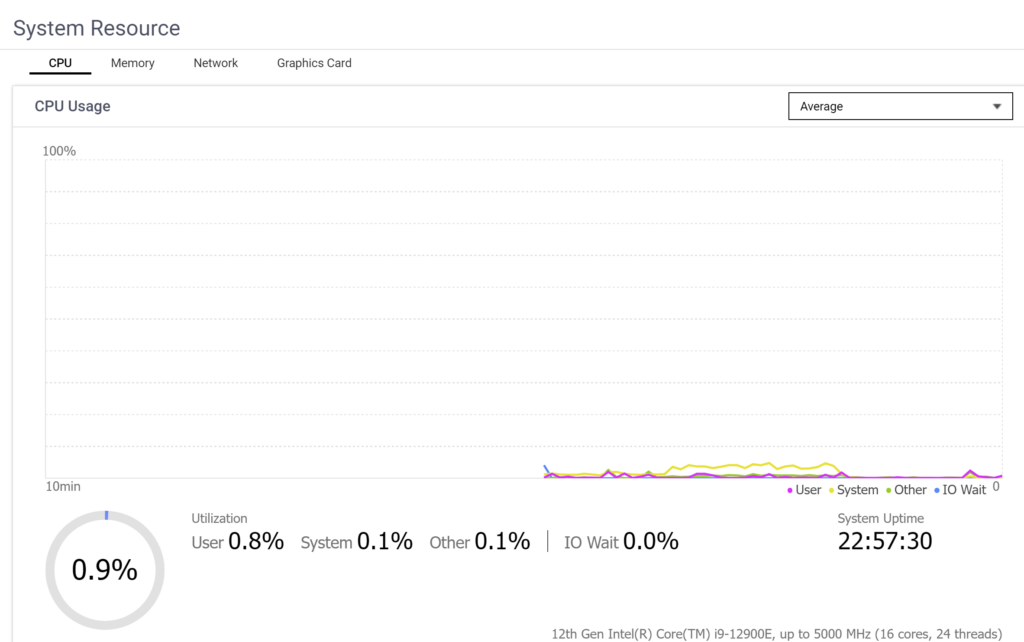

The CPU load during the big data transfer was reassuringly low.

The unit stayed quiet during the big write, and the temps remained under control. The big fans didn’t speed up at all.

I connected my main laptop to the NAS with Thunderbolt 4. I configured the OS to use it. It was very easy; the NAS defaulted to the right configuration when I went into the virtual switch window.

But the data transfer rate was about 2.5 Gb/s. I figured it was using the Ethernet port.

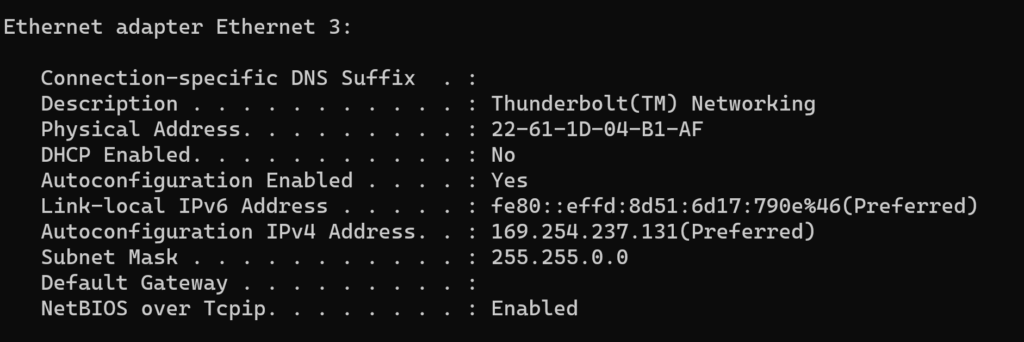

I ran ipconfig and the laptop was seeing the connection, and had taken on an IP address. But the subnet mask was much bigger than that for the LAN:

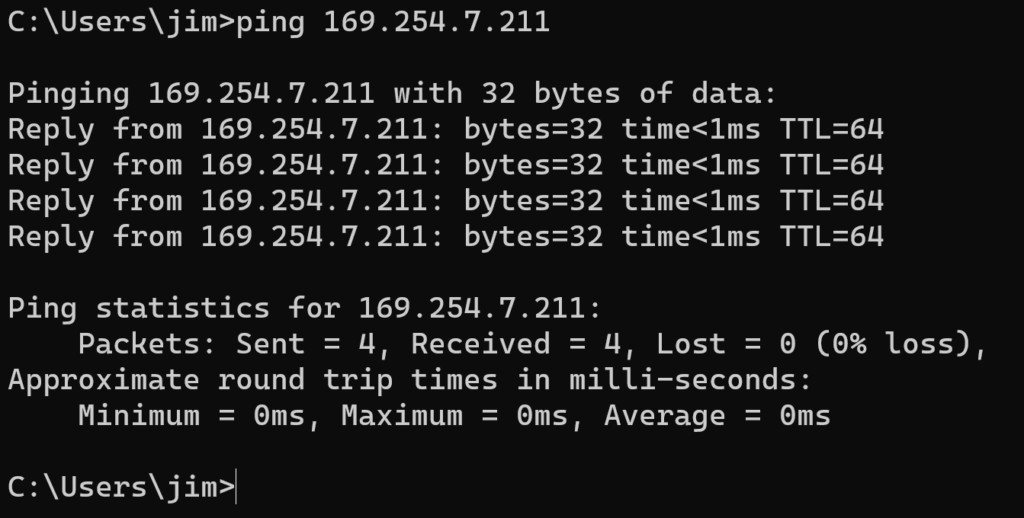
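Python’s standard `ipaddress` module offers one way to compare two subnet masks like these. The addresses and masks below are hypothetical placeholders, not the values from my screenshot; substitute whatever ipconfig reports.

```python
import ipaddress

# Hypothetical addresses and masks; replace with the ipconfig output.
thunderbolt = ipaddress.ip_interface("169.254.10.20/255.255.0.0")
lan = ipaddress.ip_interface("192.168.1.50/255.255.255.0")

for name, iface in (("Thunderbolt", thunderbolt), ("LAN", lan)):
    net = iface.network
    print(f"{name}: {net} (/{net.prefixlen}), {net.num_addresses} addresses")

# Check whether a given NAS address (also hypothetical) is reachable
# directly on the Thunderbolt subnet.
nas_over_thunderbolt = ipaddress.ip_address("169.254.10.1")
print(nas_over_thunderbolt in thunderbolt.network)
```

A shorter prefix length means a bigger subnet, which is what a mask like 255.255.0.0 indicates relative to 255.255.255.0.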

I could ping the NAS over Thunderbolt if I typed in the IP address and bypassed DNS:

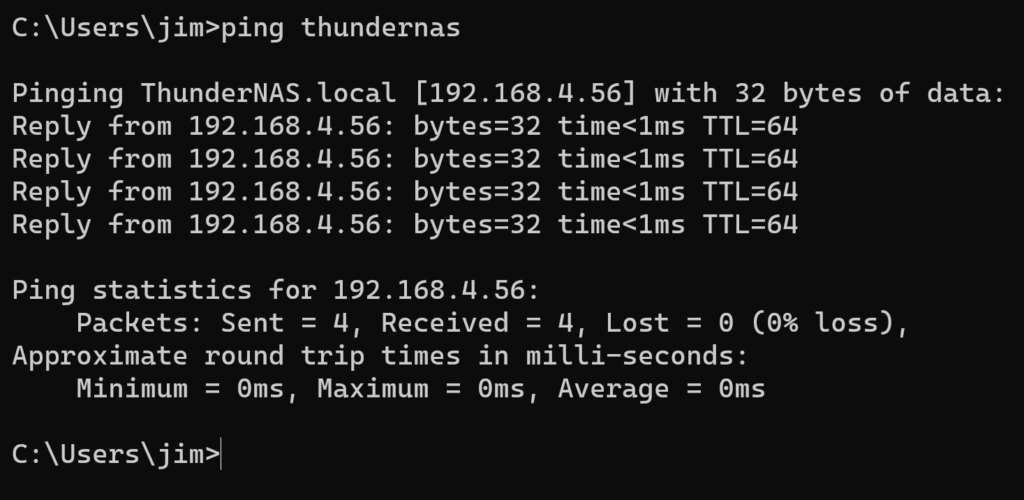

But if I pinged the NAS by name, the DNS resolved it to a LAN connection:

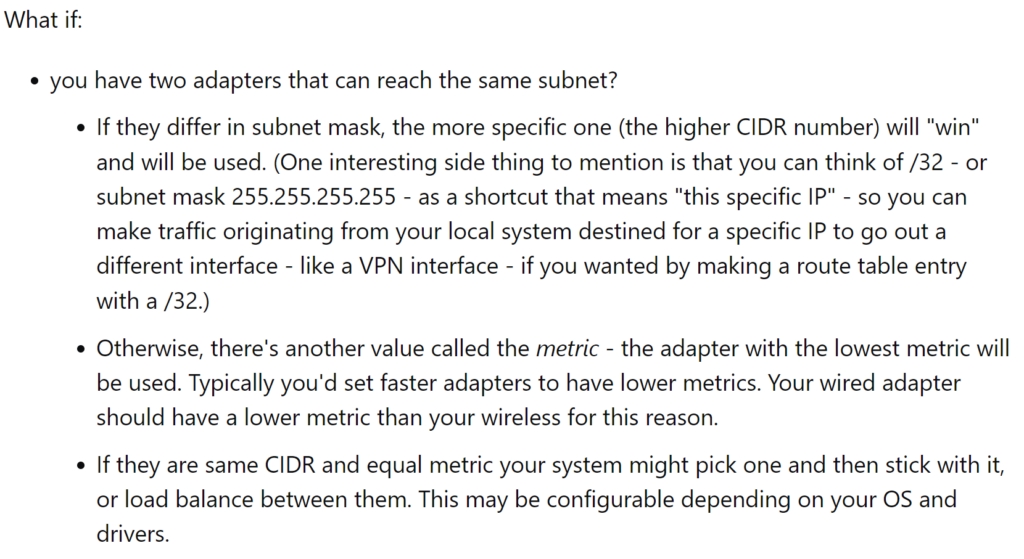
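To see which path a name lookup would send traffic down, a small script can resolve the name and report which subnet each returned address belongs to. This is only a sketch: the host name `nas.example` and both network ranges are hypothetical stand-ins, and the lookup simply reports a failure if the name does not resolve.

```python
import ipaddress
import socket

# Hypothetical networks; replace with the subnets from ipconfig.
NETWORKS = {
    "Thunderbolt": ipaddress.ip_network("169.254.0.0/16"),
    "LAN": ipaddress.ip_network("192.168.1.0/24"),
}


def classify(address: str) -> str:
    """Return the label of the first known network containing address."""
    ip = ipaddress.ip_address(address)
    for label, net in NETWORKS.items():
        if ip in net:
            return label
    return "other"


def resolve_ipv4(hostname: str) -> list[str]:
    """Return the distinct IPv4 addresses the resolver gives for hostname."""
    infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET)
    return sorted({info[4][0] for info in infos})


try:
    for addr in resolve_ipv4("nas.example"):  # hypothetical NAS name
        print(addr, "->", classify(addr))
except OSError as exc:
    print("lookup failed:", exc)
```

If the name comes back with a LAN address, connecting by the Thunderbolt IP address instead, as with the ping above, sidesteps the lookup.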

I found some ideas on the web:

But I couldn’t figure out how to reconfigure the DHCP server in the NAS.

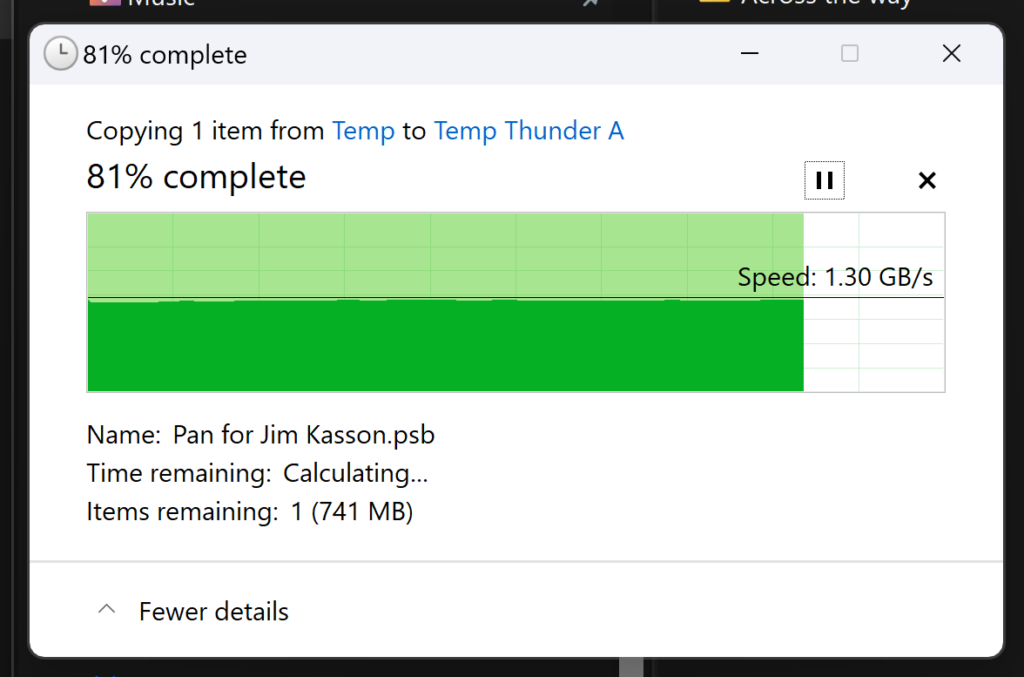

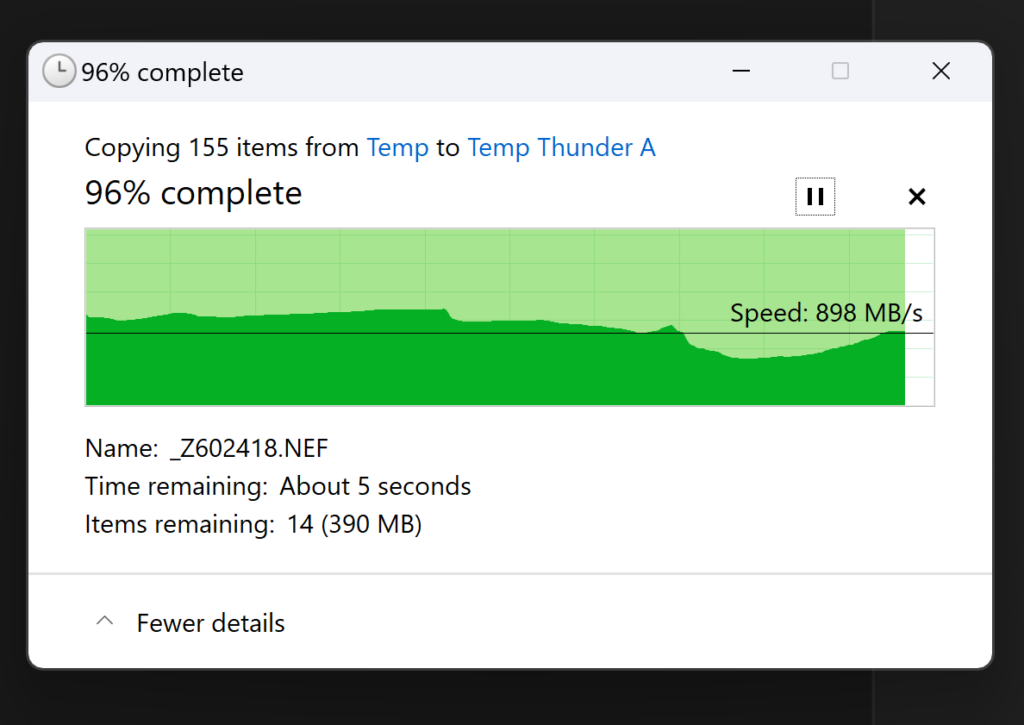

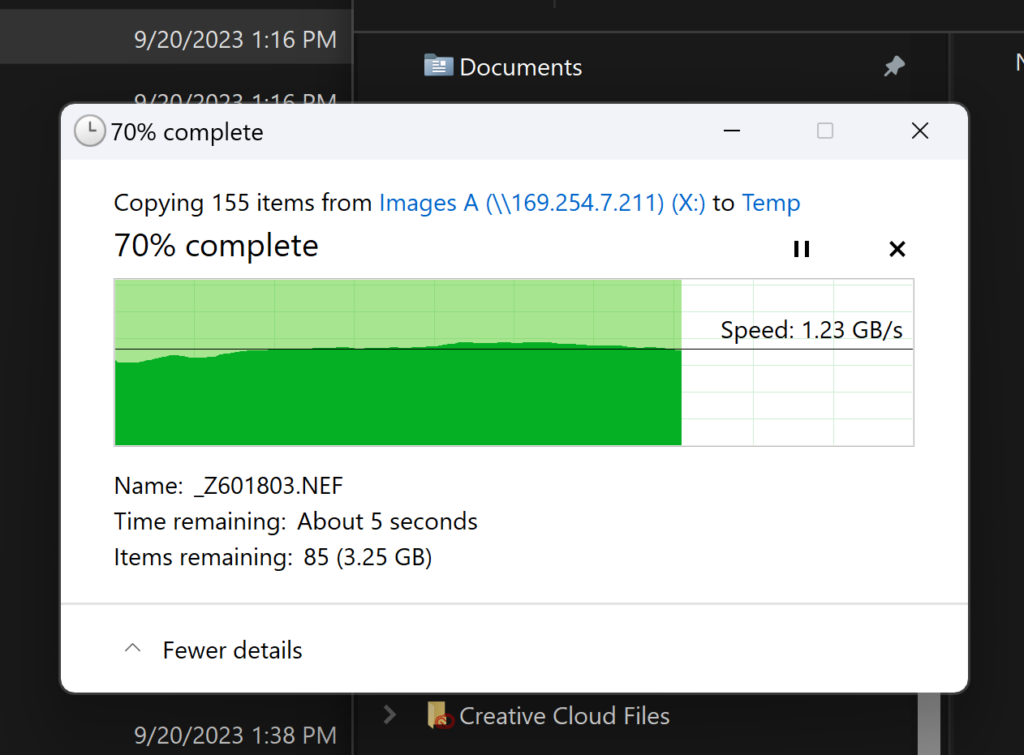

While I was floundering around, I found a Mount window, and mounted the NAS as a storage device under Windows. I mapped one of the shared folders as a Windows network drive (X:). Then I transferred a 4 GB file both from the laptop’s SSD to the NAS (let’s call that the up direction), and from the NAS to the laptop’s SSD (the down direction).

That’s as fast as I got with the OWC Thunderbolt 4 box.

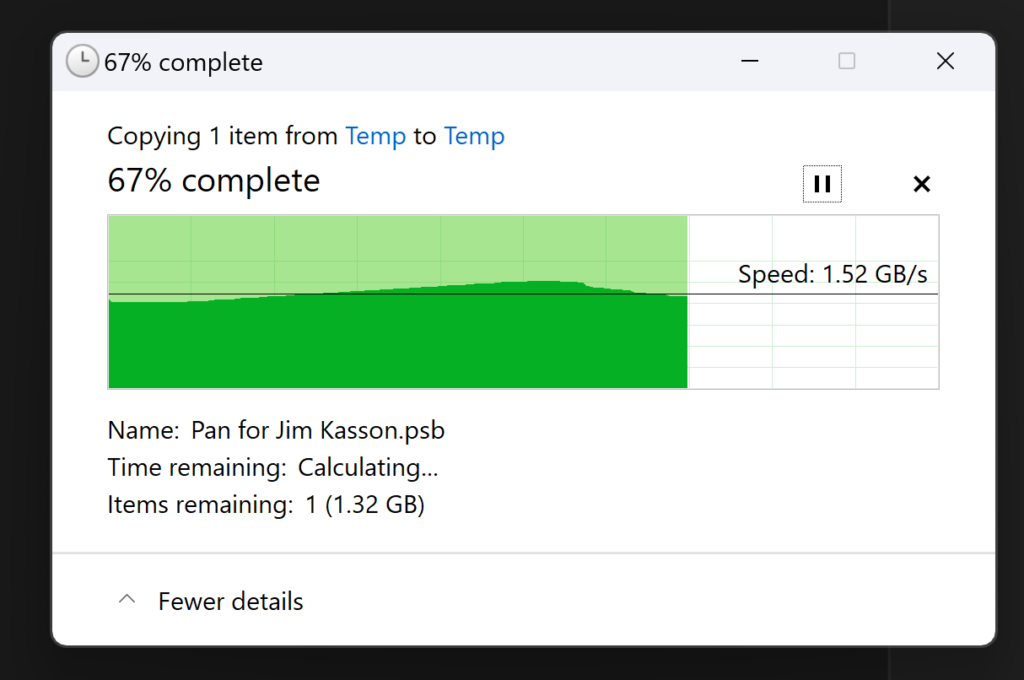
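For anyone who wants to repeat this kind of test without watching the Windows copy dialog, a timed copy can be scripted. The sketch below is not how I took the measurements above. It demonstrates the idea on a small temporary file so that it runs anywhere; for a real test, point `timed_copy` at a multi-gigabyte file on the local SSD and at a path on the mapped drive, and keep in mind that operating system caching can inflate the result for small files.

```python
import os
import shutil
import tempfile
import time


def timed_copy(src: str, dst: str) -> float:
    """Copy src to dst and return the throughput in megabytes per second."""
    size = os.path.getsize(src)
    start = time.perf_counter()
    shutil.copyfile(src, dst)
    elapsed = time.perf_counter() - start
    return size / 1e6 / max(elapsed, 1e-9)


# Demo on an 8 MiB temporary file; paths here are throwaway placeholders.
with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "sample.bin")
    dst = os.path.join(tmp, "copy.bin")
    with open(src, "wb") as f:
        f.write(os.urandom(8 * 1024 * 1024))
    rate = timed_copy(src, dst)
    copied_ok = os.path.getsize(dst) == 8 * 1024 * 1024
    print(f"{rate:.0f} MB/s")
```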

Somewhat smaller files are a little slower:

CPU load remained low:

So far, so good.
