I got the Thunderbolt-attached QNAP TVS-h874T upgraded to 25/10 GbE networking and installed two M.2 8 TB drives. See the previous post for details. Now it’s time to do some performance testing. A practical way to measure system-level NAS performance is to copy files of varying length from the NAS to a local drive. I’ve been doing that, but you can get more consistent results if you take the local drive’s performance out of the picture. I’ve been using a tool provided by ATTO to measure local drive performance, but it doesn’t work on network drives.

But there’s a workaround. First, map a share on the NAS to a drive letter in Windows.

Then edit the registry:

- Click Start, type regedit in the Start Search box, and then press ENTER.

- Locate and then right-click the following registry subkey:

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\CurrentVersion\Policies\System

- Point to New, and then click DWORD Value.

- Type EnableLinkedConnections, and then press ENTER.

- Right-click EnableLinkedConnections, and then click Modify.

- In the Value data box, type 1, and then click OK.

- Exit Registry Editor, and then restart the computer.
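If you’d rather not click through regedit, the same change can be expressed as a .reg file. Here is a minimal sketch in Python that just prints the file text; the function name is my own invention, and I’m assuming a plain-text .reg file imported with administrator rights. You still need to restart afterwards.

```python
# Registry subkey from the steps above.
KEY = r"HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\CurrentVersion\Policies\System"


def linked_connections_reg(enabled=True):
    """Return .reg file text that sets EnableLinkedConnections to 1 or 0.

    Hypothetical helper for illustration; it only builds text and does not
    touch the registry itself.
    """
    value = 1 if enabled else 0
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        f"[{KEY}]",
        # DWORD values are written as eight hex digits in .reg files.
        f'"EnableLinkedConnections"=dword:{value:08x}',
        "",
    ]
    return "\r\n".join(lines)


if __name__ == "__main__":
    # Redirect the output to a file ending in .reg, then import it.
    print(linked_connections_reg())
```

Passing `enabled=False` produces the text that sets the value back to 0 if you want to undo the change later.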

Now you can use ATTO on the mapped network drive.
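As a rough cross-check that doesn’t depend on ATTO, the idea of taking the local drive out of the picture can be sketched as reading a file from the mapped drive and throwing the data away. This is only a sketch: the function name and the 1 MiB chunk size are my assumptions, and the operating system’s file cache can inflate the number if the same file is read twice.

```python
import os
import tempfile
import time


def read_throughput_mb_s(path, chunk_size=1024 * 1024):
    """Read `path` sequentially, discard the data, and return MB/s (decimal)."""
    total = 0
    start = time.perf_counter()
    # buffering=0 skips Python's own buffer layer; OS caching still applies.
    with open(path, "rb", buffering=0) as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            total += len(chunk)
    elapsed = time.perf_counter() - start
    return total / 1e6 / max(elapsed, 1e-9)


if __name__ == "__main__":
    # Demo on a small temporary local file. For a real measurement, pass the
    # path of a large file on the mapped network drive instead.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(os.urandom(8 * 1024 * 1024))
    try:
        print(f"{read_throughput_mb_s(tmp.name):.0f} MB/s")
    finally:
        os.unlink(tmp.name)
```

Nothing is written locally, so the local SSD’s write speed doesn’t enter into the result.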

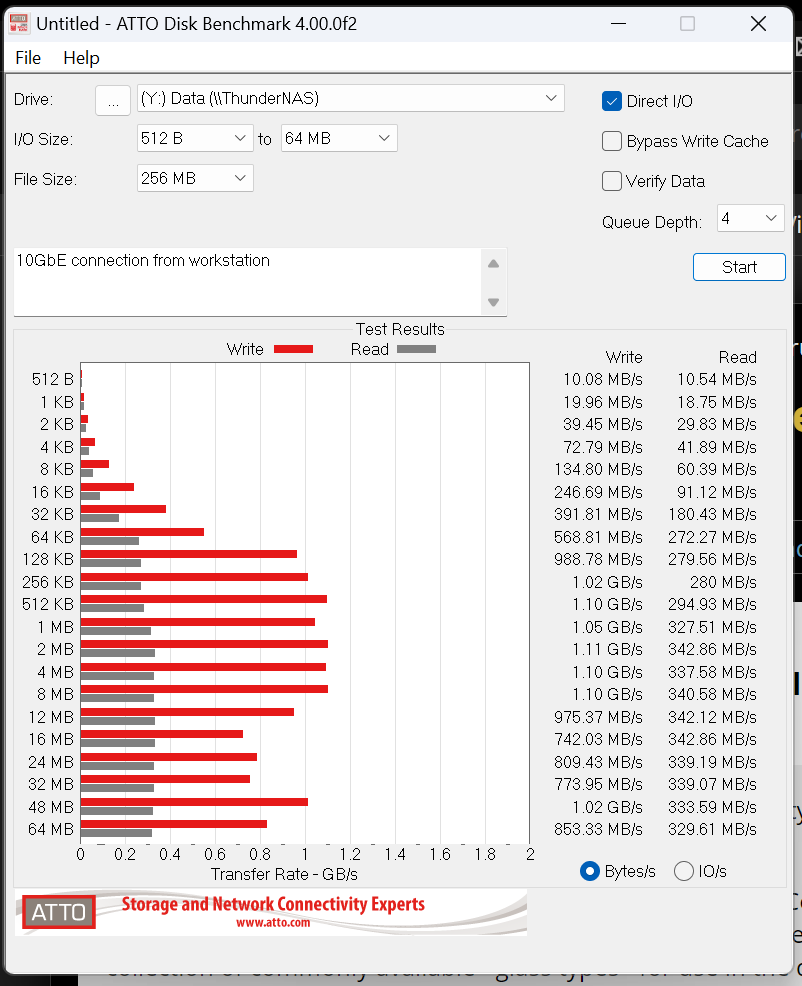

Here are the results with the Thunderbolt-attached NAS, which I’ve named ThunderNAS.

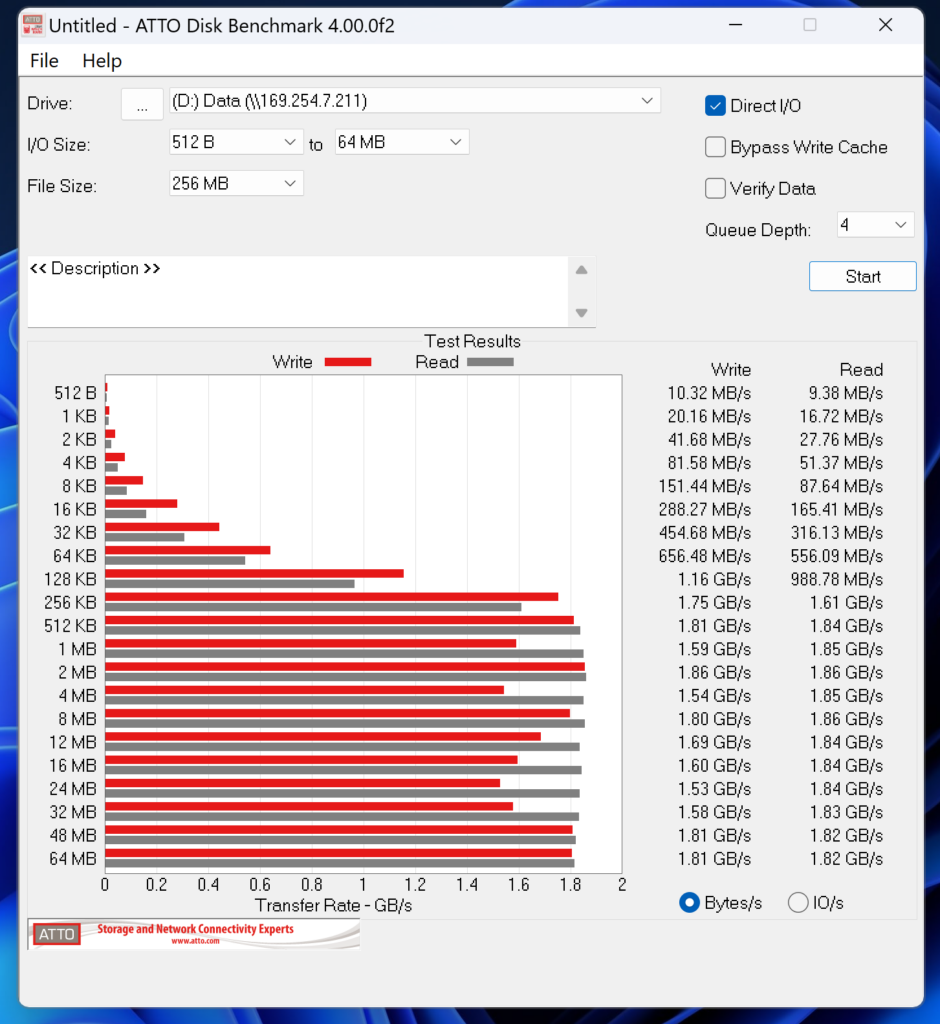

First for the RAID 5 (7/1) array of 4 TB SanDisk SATA drives:

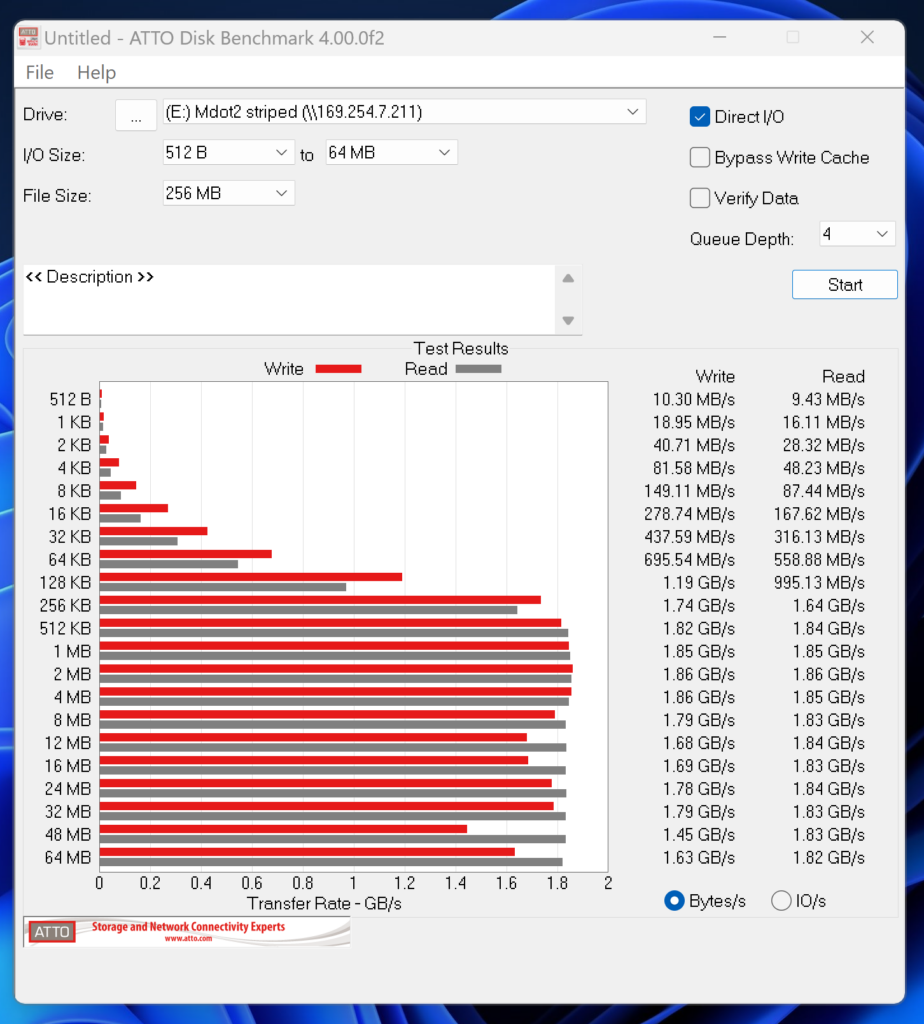

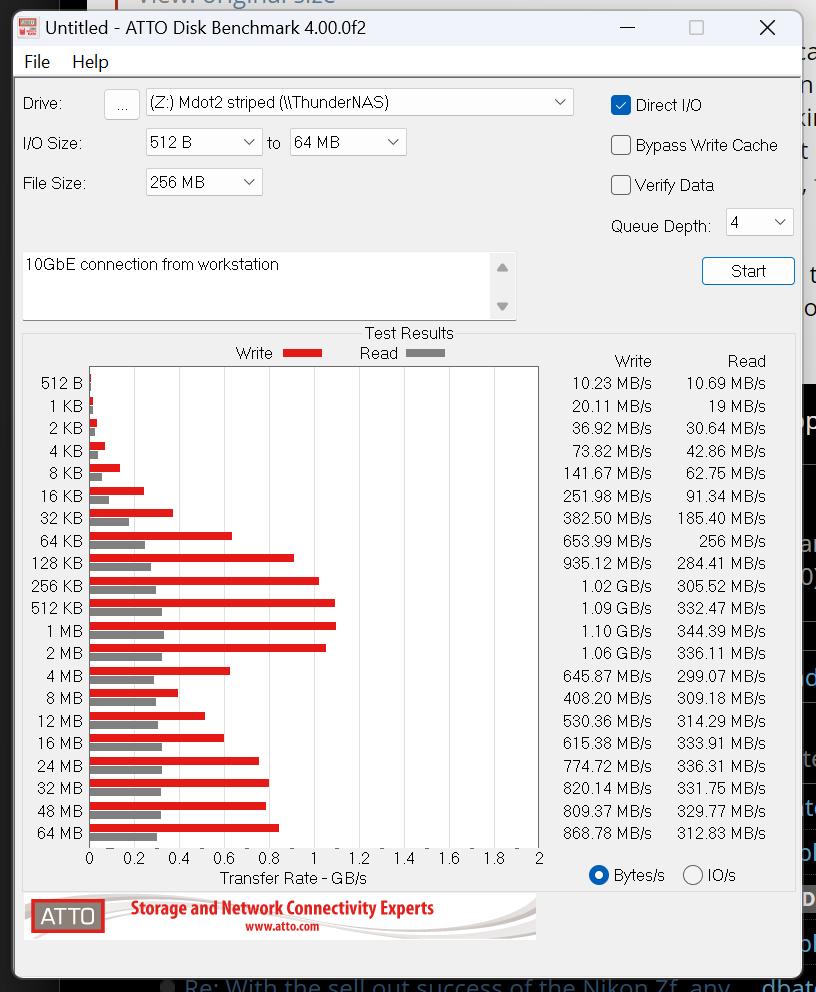

And now for a two-drive striped array of 8 TB Crucial M.2 SSDs:

There is no advantage to using the faster drives this way in this application. Maybe there would be if I could get more than two M.2 drives in the NAS, but this one holds only two.

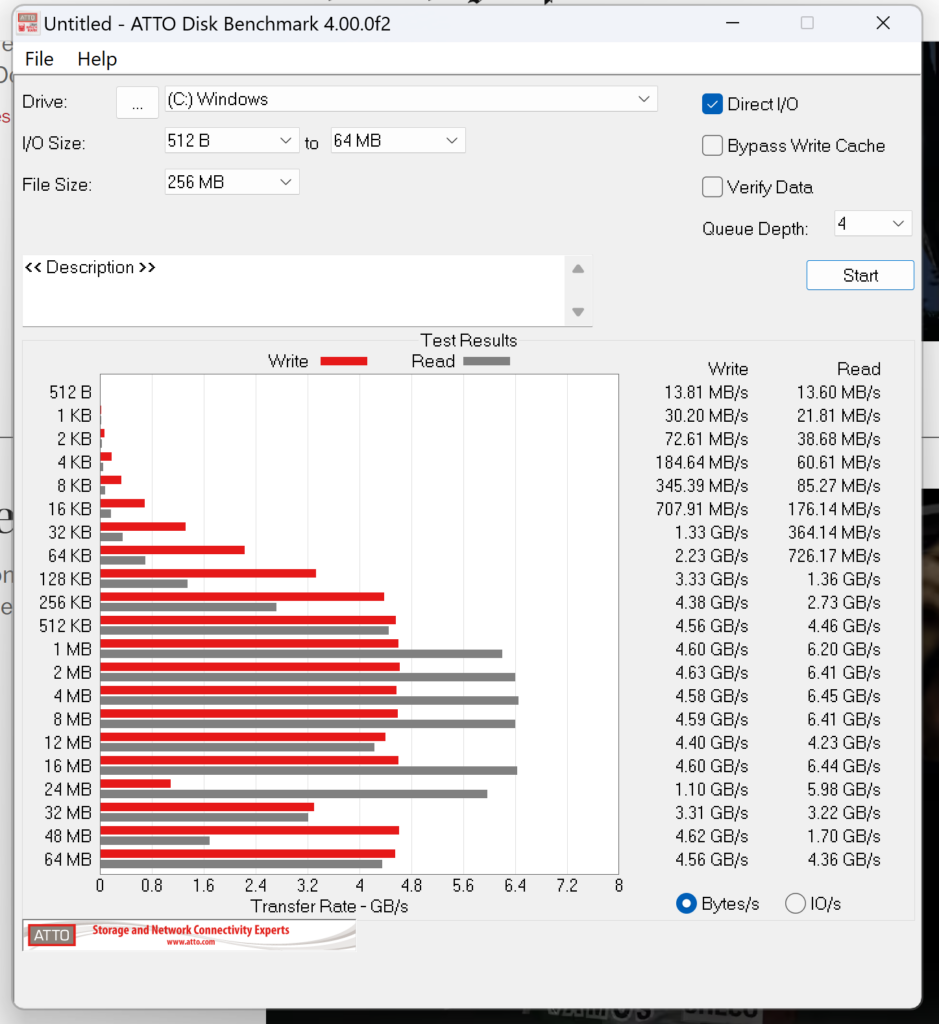

Here’s the performance of the local SSD in my Lenovo T16 laptop:

More than twice as fast.

Now let’s look at performance of the 10 GbE connection to the same box. I ran the benchmark program from my main workstation. First the RAID 5 array.

We’re achieving close to wire speed for writes, but roughly a third of that for reads.

Looking at the M.2 striped array:

Pretty much the same story.

The maximum packet size (MTU) for both the NAS and the adapter was set to 1500 bytes, which is the highest supported by the IEEE standard. Raising that to 9000 would probably help, but I’m worried that some devices on my LAN won’t be able to deal with those long packets, and I fear that debugging that could take a long time, so I’m probably not going to try it.
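To put a rough number on what the packet size costs in framing alone, here is a back-of-the-envelope sketch. It assumes plain TCP over IPv4 with no header options and no VLAN tag, and it ignores SMB’s own protocol overhead, so treat the figures as upper bounds rather than predictions. The function name is made up for this example.

```python
# Per-frame bytes on the wire outside the MTU: preamble + start delimiter (8),
# Ethernet header (14), frame check sequence (4), inter-frame gap (12).
WIRE_OVERHEAD = 8 + 14 + 4 + 12
# Bytes inside the MTU used by headers: IPv4 (20) + TCP (20), no options.
IP_TCP_HEADERS = 20 + 20


def tcp_payload_mb_s(link_gbit_s, mtu):
    """Upper bound on TCP payload throughput in MB/s (decimal) for an MTU."""
    efficiency = (mtu - IP_TCP_HEADERS) / (mtu + WIRE_OVERHEAD)
    return link_gbit_s * 1e9 / 8 / 1e6 * efficiency


for mtu in (1500, 9000):
    print(f"MTU {mtu}: {tcp_payload_mb_s(10, mtu):.0f} MB/s")
```

Under those assumptions, a 10 GbE link tops out at about 1187 MB/s of payload with 1500-byte packets and about 1239 MB/s with 9000-byte packets, a difference of roughly 4%. Any larger gain from the longer packets would have to come from the lower per-packet processing load on the NAS and the adapter.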

As long as we’re testing performance, let’s take a look at the two other QNAP NASs.

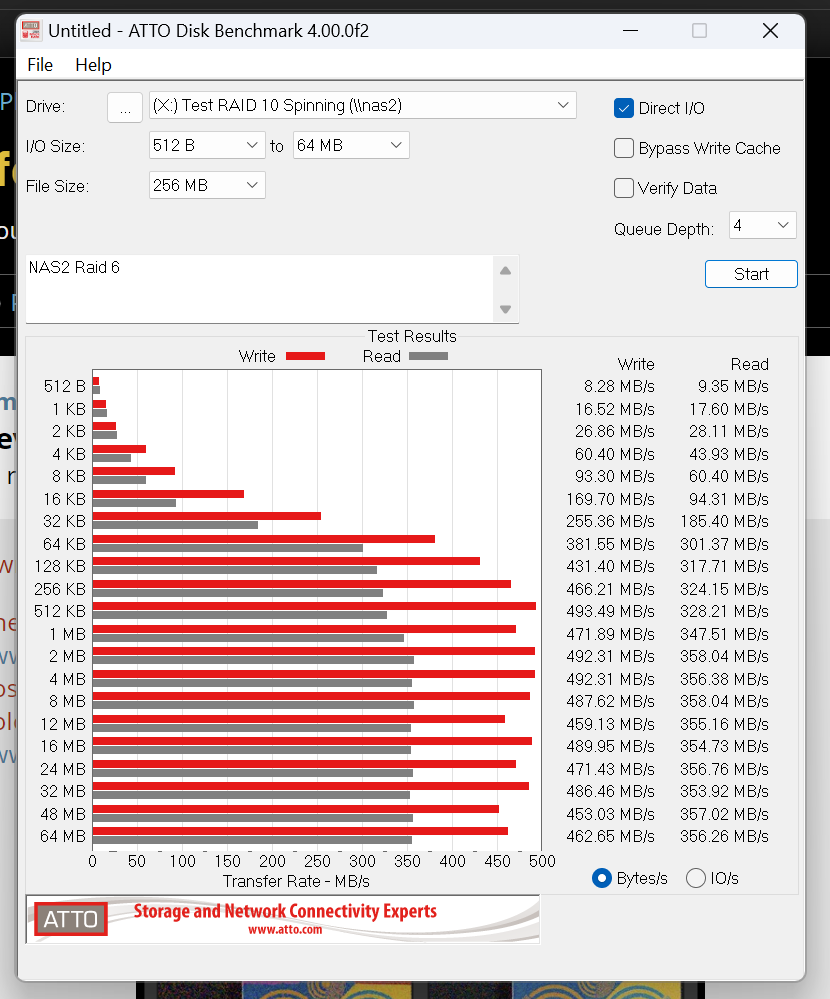

The RAID 6 spinning rust array:

Same read rates. Half the write rates.

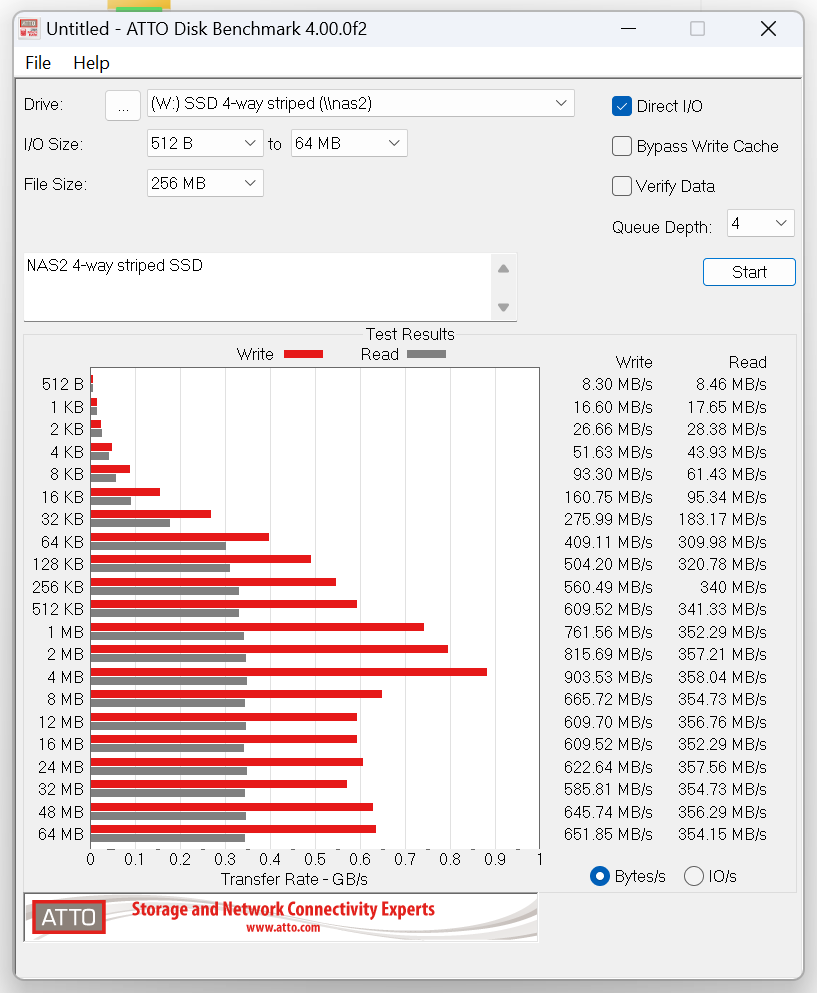

Looking at the 4-way striped SSD array in that NAS.

Writes have gone up a bit. Reads are unaffected.

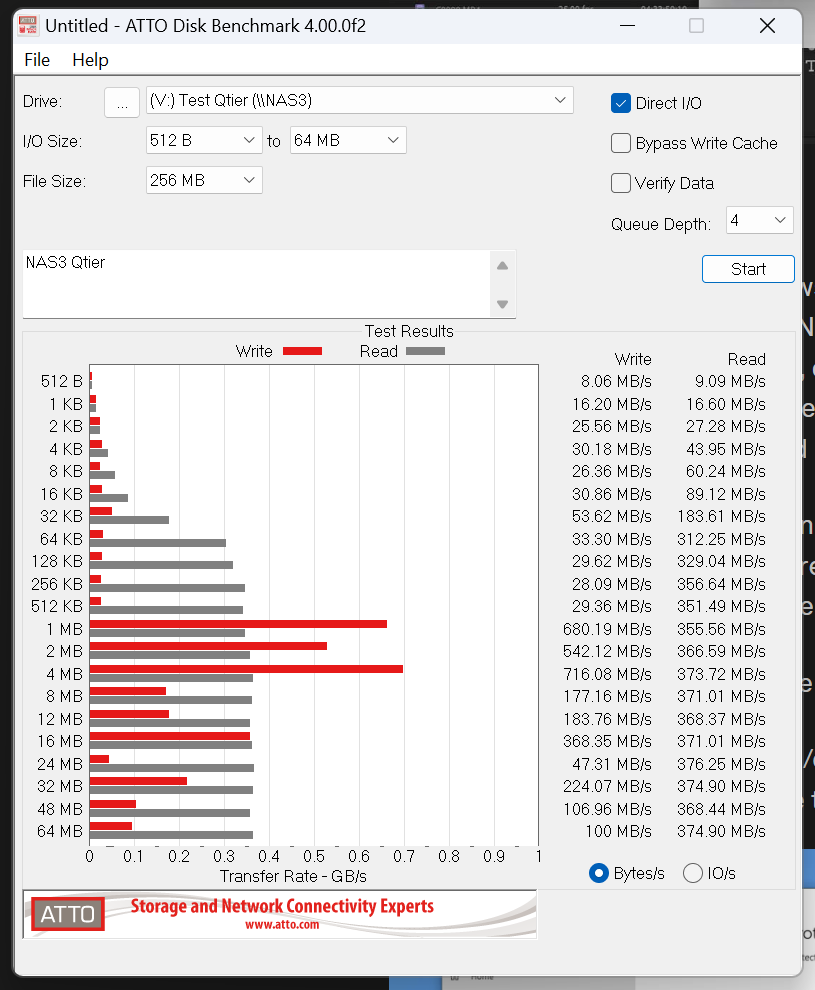

Testing NAS3, which has the RAID 6 spinning array and four 4 TB SSDs in a RAID 10 configuration with QNAP’s Qtier option enabled:

In general, same read performance, lower write performance for large transfers. The write caching doesn’t require the data to already be on the SSDs, so this low level of write performance is a surprise. I’m going to take the tiering away from this array.

Is the QNAP model number correct? I couldn’t find any TVX items on their website or in Google, but I did have numerous hits for TVS-h874T, searching for “QNAP h874T”.

Fixed now. Thanks.